Earlier this month, the Apache Hop PMC and community released Apache Hop 1.2.0.

Apache Hop 2.1.0 is available

Bart Maertens

The Apache Hop team just released version 2.1.0.

This new release is the result of four and a half months of work on over 200 tickets and comes packed with new functionality, bug fixes and improvements.

Let's see what you'll find in Apache Hop 2.1.0.

Apache Beam

Running Apache Hop pipelines have been supported on Apache Beam run configurations for Apache Spark, Apache Flink and Google Dataflow since the very early Apache Hop days.

Running Apache Hop pipelines have been supported on Apache Beam run configurations for Apache Spark, Apache Flink and Google Dataflow since the very early Apache Hop days.

As with every release, Apache Hop is upgraded to support the latest version of Apache Beam and the supported engines available. Hop 2.1.0 comes with Apache Beam 2.41.0 and support for Apache Spark 3.3.0 and Apache Flink 1.15.2.

With this Beam version upgrade, a number of changes in the Hop internals were made to significantly speed up your Apache Hop pipelines on Spark, Flink or Dataflow.

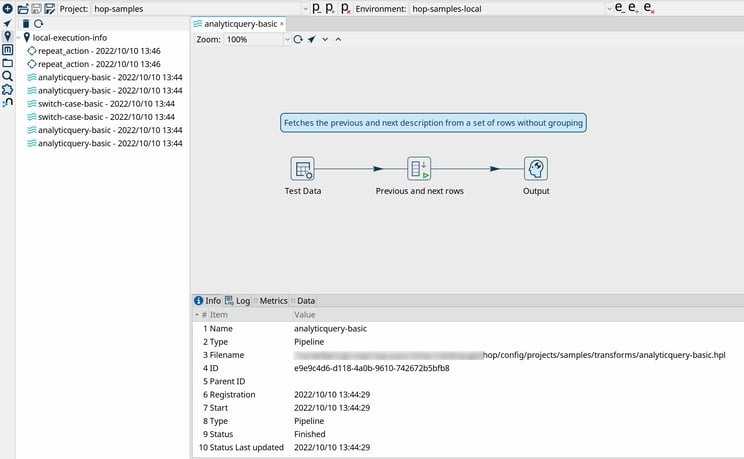

Execution Information Framework and Data Profiling

A crucial aspect of monitoring and troubleshooting any data project is finding out what happened during your workflow and pipeline execution. This has always been possible in earlier versions of Hop, but there was room for improvement in the way information was stored and presented.

The new execution information and data profiling framework, by far one of the most significant areas of new functionality in Apache Hop 2.1.0, is the first iteration in a framework that aims to provide better insights in all areas of your workflow and pipeline execution.

Hop users can configure where and how execution information is stored, currently with support for the local file system, a remote Hop Server and a Neo4j graph database.

Alongside the pure execution information, a data profiling framework can be configured to profile the data that flows through a pipeline, or to sample the first, last or a random set of rows.

A new Execution Information perspective provides an overview of the ongoing and previous executions of your workflows and pipelines, with the ability to drill up to or down from the parent or child workflow or pipeline.

All workflow and pipeline engines can be configured to gather and store execution information. Data profiling is only available to pipelines.

Kubernetes

With an increasing number of Apache Hop deployments in Kubernetes environments, the Apache Hop community added two Helm charts to Apache Hop 2.1.0.

In Kubernetes, Helm is considered to be the package manager, with Helm Charts being the actual packages. Hop 2.1.0 comes with Helm Charts for Hop Server and Hop Web.

New plugins

The number of plugins in Apache Hop grows with every release, and 2.1.0 is no exception.

The new transforms are:- Execution Information: lets Hop users read data from a previous workflow or pipeline execution location

- Microsoft Access Output transform lets you write data to Microsoft Access databases. Even though MS Access is not the most advanced data platform, it's still an indispensable data format in a lot of organizations.

- Snowflake Bulk Loader transform lets you bulk upload data to your Snowflake analytical cloud databases.

Apache Hive was missing from the supported database types in Apache Hop. This is now fixed.

Community

As an Apache project, the community is key for Apache Hop. The Apache Hop community continues to grow in the number of community members, geographical areas, and contributions.

Great communities build great software, a huge thank you and shoutout to everyone who was involved to make Apache Hop 2.1.0 the big release it is.

Apahe Hop and know.bi

At know.bi, we were involved with Apache Hop since its inception. We are active and proud community members and contributors and will continue to do so. Get in touch if you'd like to find out more about how Apache Hop can help you solve your data engineering and data orchestration challenges.

Blog comments