Another two months after the 2.8.0 release, the Apache Hop community is proud to announce the...

know.bi blog

building rock-solid data platforms, one step at a time

The Apache Hop community released Apache Hop 2.8.0 late last week. This release contains over three...

The Apache Hop community released version 2.7.0 earlier this month. Let's take a closer look at...

After three months of work on 65 tickets, the Apache Hop community released Apache Hop 2.6.0...

Apache Hop 2.5.0 was released late last week. 2.5.0 is the latest in a series of mostly hardening...

The Apache Beam project released 2.48.0 just in time to be included in the upcoming Apache Hop...

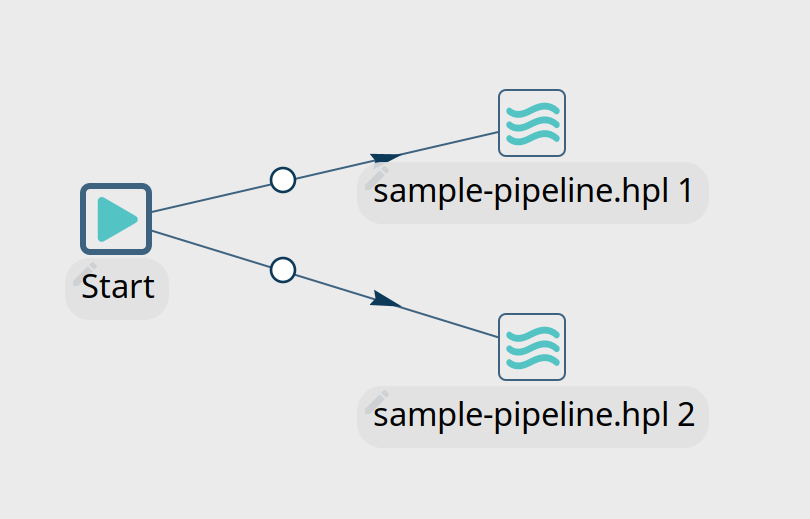

A number of questions have been raised in the Apache Hop chat about running workflows and pipelines...

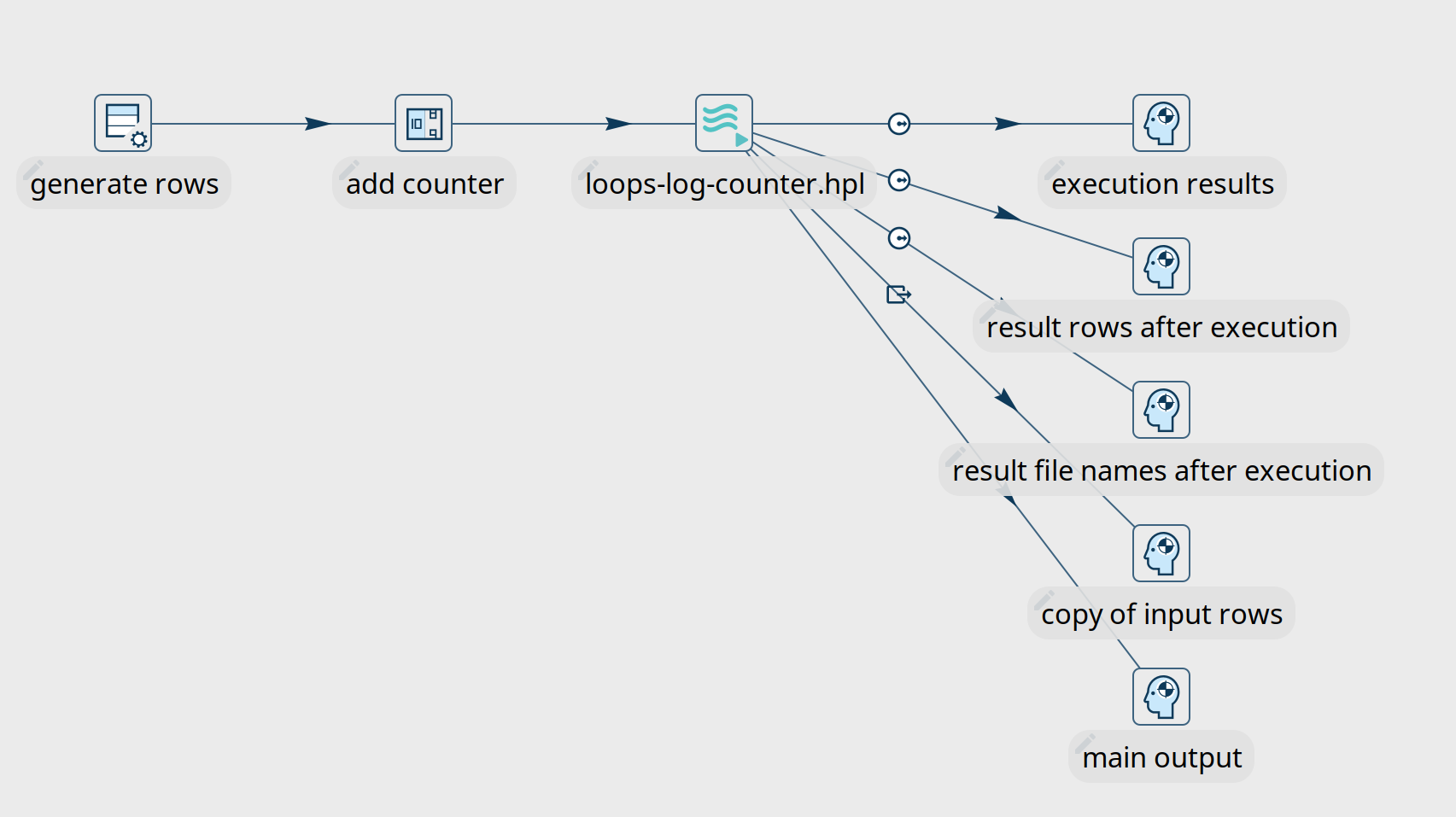

One of the first concepts new Apache Hop users learn is that pipelines are executed in parallel and...

In any data engineering project, there are lots of use cases where you'll want the same process to...