APACHE HOP

Build high-quality data pipelines without writing a single line of code.

Apache Hop

Apache Hop is an innovative open source, metadata-driven data engineering and data orchestration platform that lets you visually describe your data pipelines and workflows.

Apache Hop is not just a low-code or no-code platform: it is a visual development platform that enables citizen developers to be as productive with data as possible.

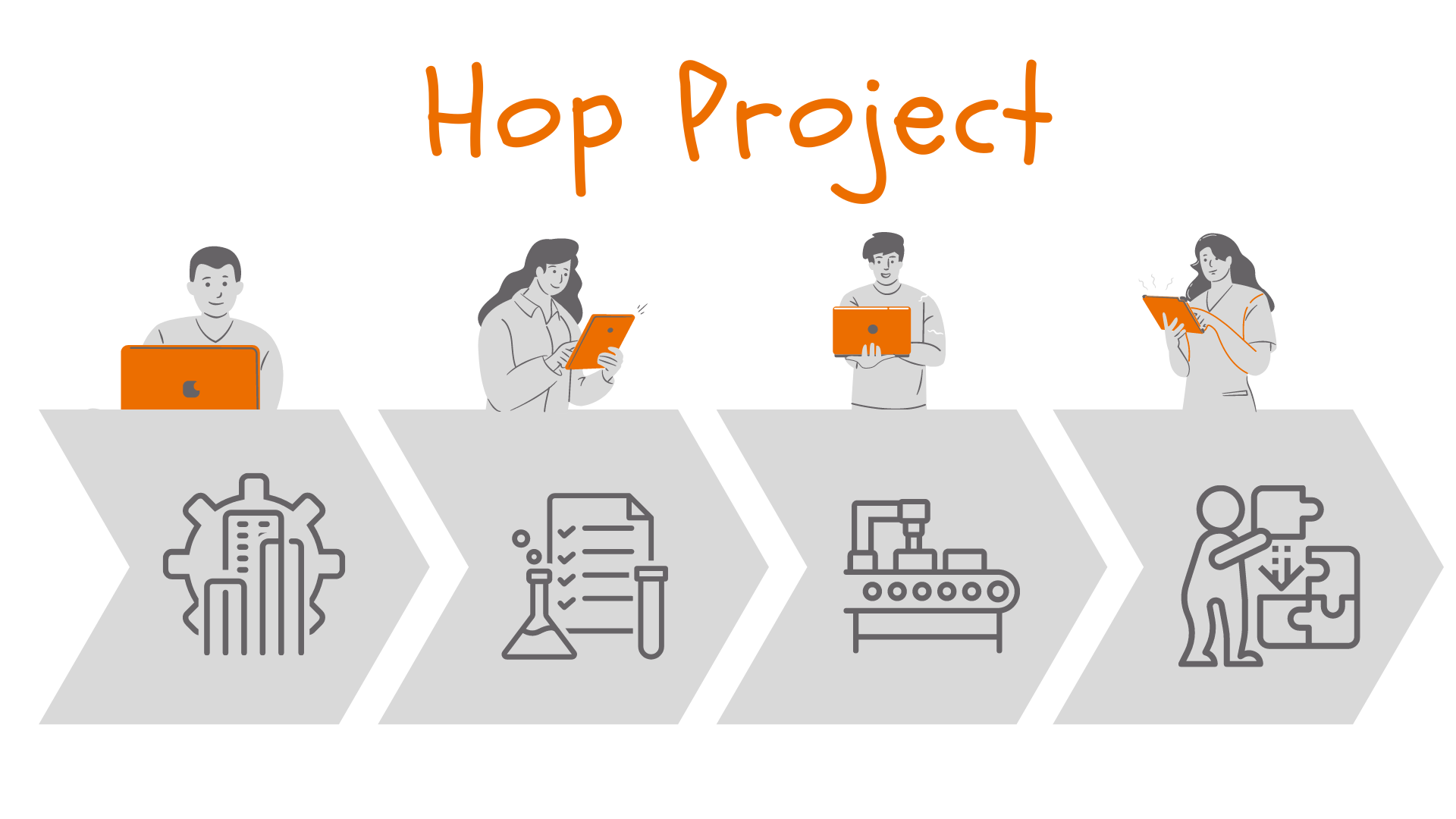

Pipelines and workflows in Apache Hop are developed visually. Just like standard source code, they are version controlled, have unit tests and can be integrated into your entire DevOps and CI/CD environment.

Pluggable runtimes allow you to design pipelines once and run them in the environment where they fit best. Data volumes that can be processed on a single machine run in the native engine. If your data volumes are too big for a single machine, Apache Hop pipelines can run on Apache Spark, Apache Flink or Google Dataflow through the Apache Beam runtimes.

Apache Hop pipelines and workflows grow with your organization: design once, run anywhere!

Apache Hop's life cycle support allows you to work in project and deployment environments, with git integration, unit testing, execution information and much more.

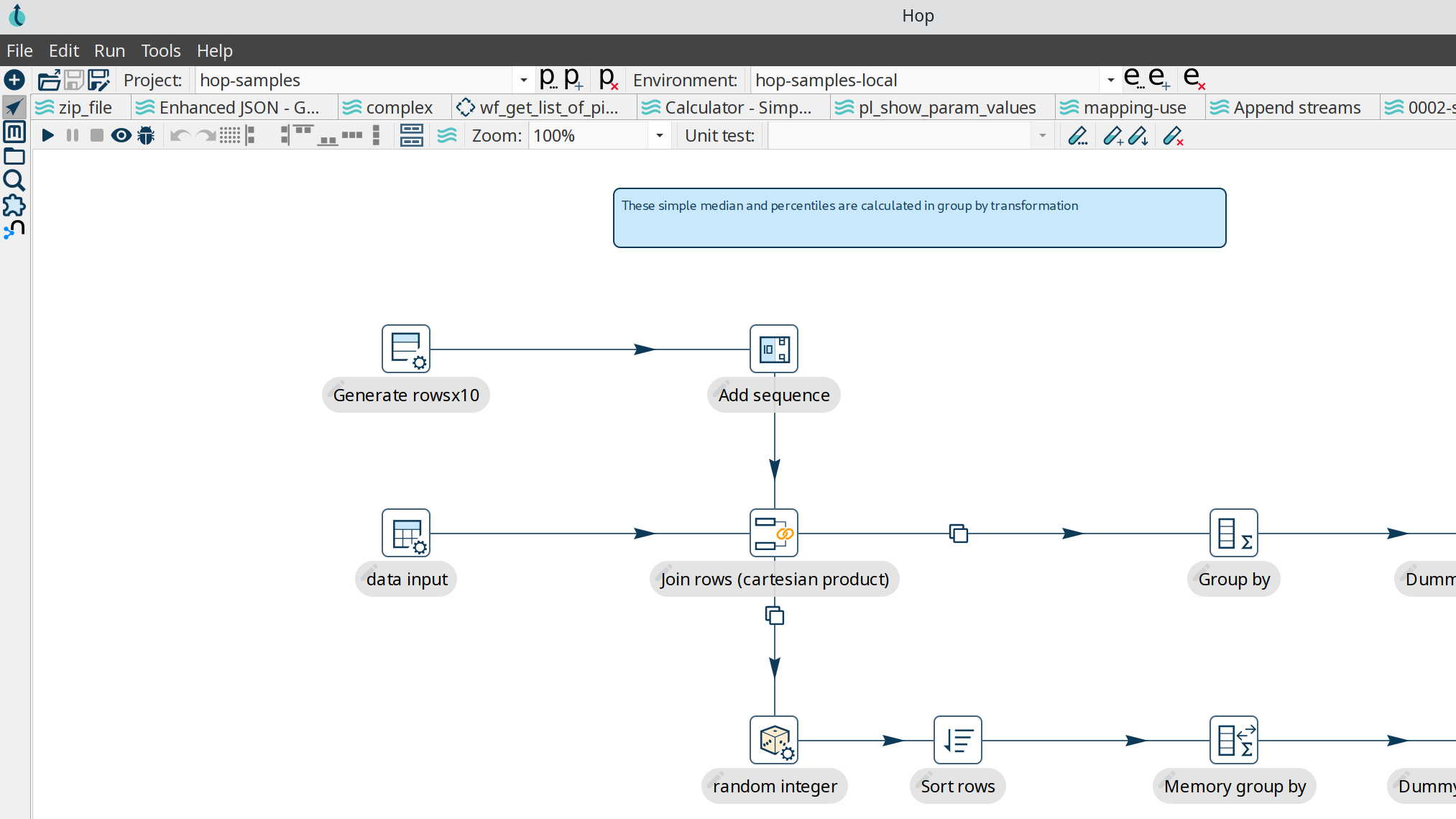

Visual Development

Apache Hop enables people of all technical skill levels to be productive with data without the need to write code.

Data processes need to be easy to design, easy to test, easy to run, and easy to deploy. Visually designing your data processes greatly enhances the maintainability of your data projects and the productivity of your data teams.

- develop data pipelines without writing a single line of code

- unlock hundreds of data sources

- clean, transform and enrich your data

- obtain real-time insights based on consistent and correct information

- turn data into useful information

Although visually designed, all our work items can be managed just like any other software project. Version control, unit testing, CI/CD and documentation are all first-class citizens in the Apache Hop platform.

True Open Source

Open source is the only way to build modern and innovative software. Open standards, a pluggable architecture and a community that cooperates across the boundaries of companies and organizations are crucial to building a platform that helps you solve today's data problems.

Global communities, united around improving software and solving data problems, introduce new concepts and capabilities faster and more efficiently. Full visibility into the code base and open discussions about how features are developed and bugs are fixed help the platform release early and evolve quickly.

Apache Hop is an Apache top-level project: no single company owns it or drives its development. The source code is, and always will be, freely available under the Apache License 2.0.

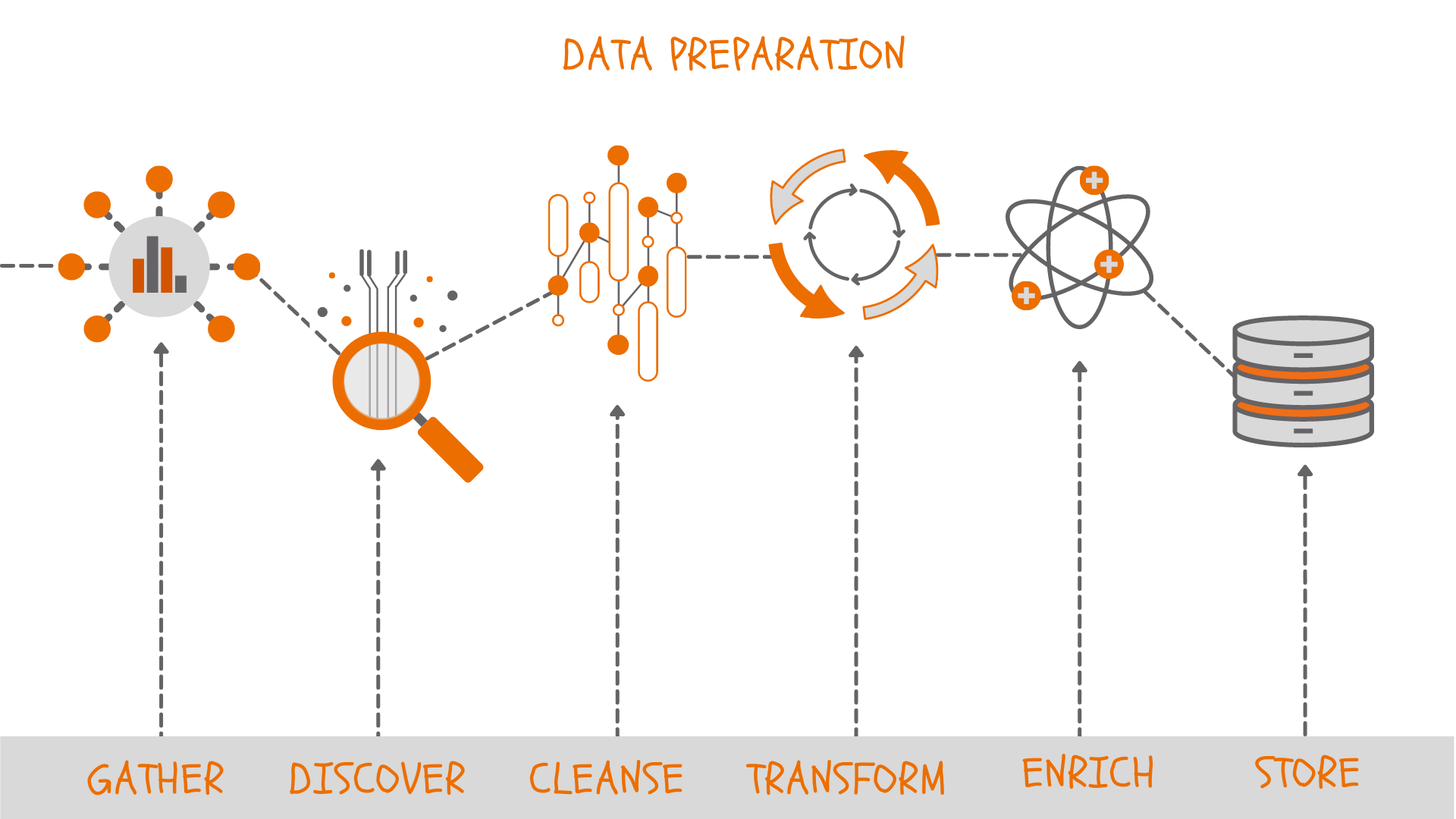

Data Preparation and Data Governance

This is not just a data science issue: in data projects in general, 80% of the time is spent on data preparation. Data comes from a wide variety of sources, often with widely varying quality. A lot of data preparation and cleanup is required to get your data into a state that allows analysis.

Managing data throughout its entire life cycle matters more than ever. Apache Hop not only helps you to prepare data before it can be analyzed but also keeps track of your data's entire life cycle.

Hop already allows you to track data lineage in graph databases, but there's more to come. Upcoming integrations with metadata, data lineage and data governance platforms will allow you to track every single data point in your organization, from its inception until it is put to rest.

Metadata Driven

Metadata is at the core of the Apache Hop architecture: the metadata perspective in Hop GUI lets you manage database connections (relational and NoSQL), run configurations for workflows and pipelines, logging and many other metadata types.

Apache Hop implements a strict separation of data and metadata, which allows you to design data processes regardless of the data itself. Every object type in Hop describes how data is read, transformed or written, or how workflows and pipelines need to be orchestrated.

Metadata is what drives Apache Hop internally as well. Hop uses a kernel architecture with a robust engine. Plugins add functionality to the engine through their own metadata.

Apache Hop plugins can define their own metadata object types, so the metadata types available to you depend on the plugins you have installed.
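The kernel-plus-plugins idea can be illustrated with a minimal sketch. This is not Hop's actual plugin API: the `Kernel` class, the `build_kernel` helper and the type keys below are hypothetical names chosen only to show how the set of available metadata types could follow from the installed plugins.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Kernel:
    """Hypothetical small core that only knows the types plugins register."""
    metadata_types: Dict[str, str] = field(default_factory=dict)

    def register(self, type_key: str, description: str) -> None:
        # Refuse duplicates so two plugins cannot silently claim the same key.
        if type_key in self.metadata_types:
            raise ValueError(f"metadata type already registered: {type_key}")
        self.metadata_types[type_key] = description

    def available_types(self) -> List[str]:
        return sorted(self.metadata_types)


# Hypothetical plugin sets: each plugin describes itself through metadata only.
CORE_PLUGINS = {
    "rdbms-connection": "Relational database connection",
    "pipeline-run-configuration": "Where and how a pipeline runs",
}
EXTRA_PLUGINS = {
    "neo4j-connection": "Neo4j graph database connection",
}


def build_kernel(*plugin_sets: Dict[str, str]) -> Kernel:
    """Assemble a kernel from whichever plugin sets are installed."""
    kernel = Kernel()
    for plugins in plugin_sets:
        for key, description in plugins.items():
            kernel.register(key, description)
    return kernel
```

In this sketch, `build_kernel(CORE_PLUGINS)` and `build_kernel(CORE_PLUGINS, EXTRA_PLUGINS)` expose different metadata types, which mirrors the point that the types you see depend on the plugins you install.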

Runtime Agnostic

Design once, run anywhere. Apache Hop lets you design data processes and run them where it makes most sense. A data pipeline designed and tested on your local laptop can later be deployed to a remote server, or an Apache Spark, Apache Flink, or Google Dataflow cluster through Apache Beam.

The pipeline and workflow run configurations are metadata objects that decouple the design and execution phases of Apache Hop pipeline development. Think of a pipeline as a definition of how data is processed, with run configurations as a definition of where the pipeline runs.

Apache Hop supports a number of different runtime engines:

- Beam DataFlow pipeline engine: runs pipelines on Google Dataflow over Apache Beam.

- Beam Direct pipeline engine: runs pipelines on the direct Beam runner (mainly for testing purposes).

- Beam Flink pipeline engine: runs pipelines on Apache Flink over Apache Beam.

- Beam Spark pipeline engine: runs pipelines on Apache Spark over Apache Beam.

- Local pipeline engine: runs pipelines locally in the native Hop engine.

- Remote pipeline engine: runs pipelines in the native Hop engine on a remote machine.
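The split between what a pipeline does and where it runs can be sketched in a few lines. This is an illustration under stated assumptions, not Hop's API: `PipelineDefinition`, `RunConfiguration`, `run` and the engine labels are hypothetical, and only a local, in-process engine is implemented here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class PipelineDefinition:
    """What happens to the data: an ordered list of row transforms."""
    name: str
    transforms: List[Callable[[Row], Row]]


@dataclass
class RunConfiguration:
    """Where the pipeline runs. The engine labels are illustrative only."""
    name: str
    engine: str


def run(pipeline: PipelineDefinition, config: RunConfiguration,
        rows: List[Row]) -> List[Row]:
    """Execute the same definition under the chosen run configuration."""
    if config.engine != "local":
        # A remote or Beam-based engine would submit the job elsewhere;
        # that is deliberately left out of this sketch.
        raise NotImplementedError(
            f"engine '{config.engine}' is not part of this sketch")
    result = []
    for row in rows:
        for transform in pipeline.transforms:
            row = transform(row)
        result.append(row)
    return result


def upper_name(row: Row) -> Row:
    """Example transform: return a copy of the row with an upper-cased name."""
    return {**row, "name": str(row["name"]).upper()}


pipeline = PipelineDefinition("clean-names", [upper_name])
local = RunConfiguration("laptop", "local")
cleaned = run(pipeline, local, [{"name": "hop"}])
```

The pipeline object never mentions an engine, so switching to another environment means passing a different `RunConfiguration` rather than editing the pipeline.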

Why Pluggable?

A pluggable architecture allows flexibility, extensibility and maintainability. Apache Hop maximizes this by implementing all non-core functionality as plugins.

As a developer, this makes it easy to add new functionality.

As a system administrator, it gives you full control over the functionality you want to allow in your systems.

As a data designer, it gives you full control to pick and choose the functionality you want to use.

Being able to include only the plugins you need makes Hop a perfect fit for your DevOps and CI/CD environments.

Hop is built around an ecosystem of plugins. This gives your data and infrastructure teams the ability to create a custom version of Hop tailored to your exact needs.

Plugins are available for databases (relational and NoSQL, such as Neo4j, Apache Cassandra and many more), workflow actions, hundreds of pipeline transforms, scripting languages and more. External plugins let you work with Python, machine learning, geographical data and SAP systems.

Projects & Environments

Most developers who design and manage data processes on a daily basis work on a multitude of projects and modules.

Different sets of workflows and pipelines require management for at least development, acceptance, and production environments.

Every project or environment comes with its own set of variables and configurations for databases, file paths, etc. Through projects and environments, Apache Hop lets you keep a strict separation between code (projects) and configuration (environments).
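The separation between project and environment can be sketched as follows. The `${NAME}` placeholder style follows the variable syntax used in Hop, but everything else is an assumption made for illustration: the `resolve` and `configure` helpers, the variable names, the paths and the host names are hypothetical.

```python
import re
from typing import Dict

# Matches placeholders such as ${DATA_DIR}.
_VARIABLE = re.compile(r"\$\{([A-Za-z0-9_.]+)\}")


def resolve(text: str, variables: Dict[str, str]) -> str:
    """Replace every ${NAME} in text with its value from variables."""
    def substitute(match: "re.Match[str]") -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"undefined variable: {name}")
        return variables[name]
    return _VARIABLE.sub(substitute, text)


# The project holds the design; it contains placeholders only (hypothetical).
PROJECT_METADATA = {
    "input_file": "${DATA_DIR}/customers.csv",
    "db_host": "${DB_HOST}",
}

# Each environment holds the concrete values (hypothetical).
ENVIRONMENTS = {
    "development": {"DATA_DIR": "/tmp/dev-data", "DB_HOST": "localhost"},
    "production": {"DATA_DIR": "/srv/data", "DB_HOST": "db.example.internal"},
}


def configure(project: Dict[str, str], environment: Dict[str, str]) -> Dict[str, str]:
    """Apply one environment's variables to the project's metadata."""
    return {key: resolve(value, environment) for key, value in project.items()}


dev = configure(PROJECT_METADATA, ENVIRONMENTS["development"])
prod = configure(PROJECT_METADATA, ENVIRONMENTS["production"])
```

The project metadata is identical in both calls; only the environment dictionary changes, which is the code-versus-configuration split described above.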

An Active Community

Apache Hop is developed by an open and welcoming community. Everyone is welcome to join the community and contribute to Apache Hop.

There are several ways to interact with the community and contribute to Apache Hop, including asking questions, filing bug reports, proposing new features, joining discussions on the mailing lists, contributing code or documentation, improving the website, or testing release candidates.

know.bi has been heavily involved in the Pentaho community since day 1. We've only stepped up our game with the Apache Hop community. We actively contribute to the platform and will continue to do so.