Project Hop joins the Apache Software Foundation, is now Apache Hop (Incubating)

Bart Maertens

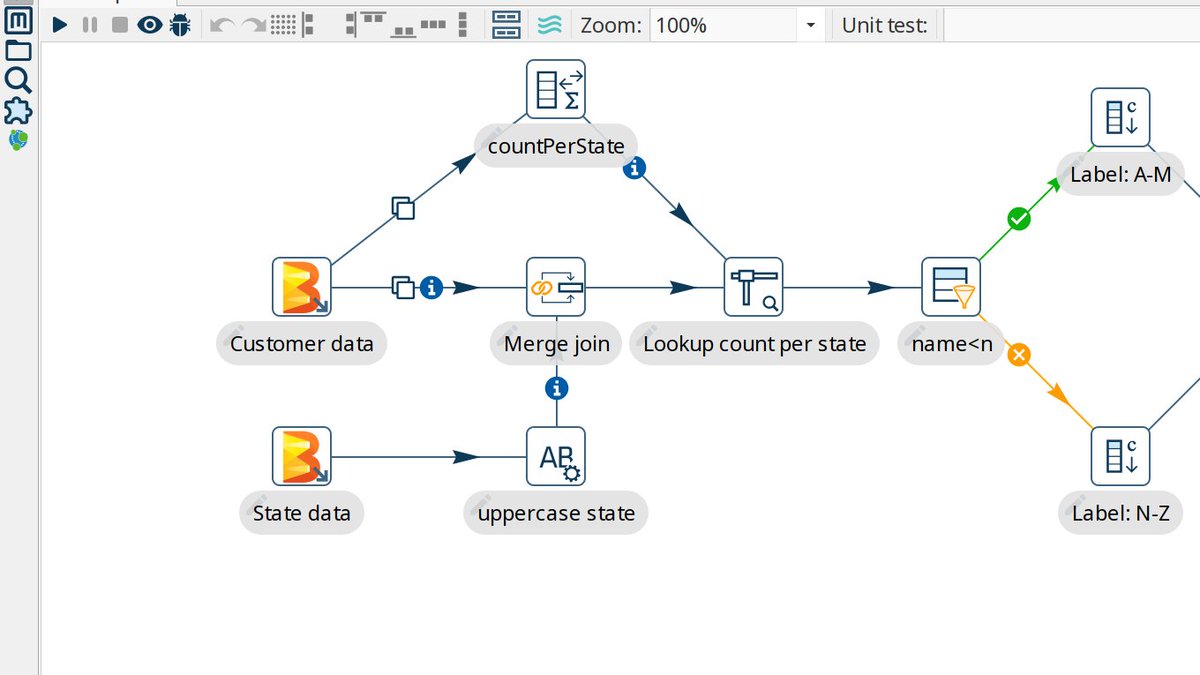

As explained in a previous post, know.bi has been working with Matt Casters (Neo4j) and our growing community of coders, testers, documentation writers and users to build Project Hop as a modern data engineering platform. After about a year of hard work, we've turned what started as a Kettle (Pentaho Data Integration) fork into an entirely new platform. Hop has a lightweight, flexible architecture with support for platforms like Apache Spark, Apache Flink and Google Dataflow (over Apache Beam), Apache Kafka and many more.

We've been very clear about our ambition to donate Project Hop to the Apache Software Foundation, and are therefore happy to announce that our proposal to enter the ASF as Apache Hop has been submitted and accepted. We have joined the ASF's Incubator program, the website, docs and code have been migrated, and Project Hop will from now on be referred to as Apache Hop (Incubating)!

What is the Apache Incubator?

Projects in the Apache Incubator have to adopt "The Apache Way" of working. A couple of key things involved are:

- Apache projects are publicly owned: no company or organization owns any of the code. Contributors submit code, documentation, etc. in their own name, not on behalf of their employer (if applicable).

- Apache projects need an open and democratic decision-making process. There's no room for "benevolent dictatorship", and community building takes priority over code. All discussions need to happen on the public mailing lists, and all decisions need to be taken through voting (on the mailing lists). Voting rounds typically stay open for at least 72 hours, in order to be as inclusive as possible.

- There will be thorough license checks to make sure all code in an Apache project is licensed under the Apache License 2.0 or a compatible license. Apache software releases are a legal handover of code rather than a technical release, so this is a topic we'll have to spend quite a bit of time on.

In addition to working according to the ASF guidelines, incubating projects (or "podlings") need to do at least one release while in the incubator.

Once all of the boxes have been checked, an incubating project can request to be accepted as a Top Level Project (TLP). The acceptance process, like any other decision in the ASF, is done through votes on the mailing list.

Because Hop has followed the Apache Way as closely as possible from the start, we hope the incubation process will be smooth sailing.

What does this mean for Hop?

By joining the Apache Software Foundation, we hope to significantly increase Hop's reach, visibility, community and contributors.

- visibility: as the central hub for a lot of today's major open source projects and data platforms, Apache projects get a lot of visibility. As an Apache project, Hop will reach a much wider audience than would have been possible as an independent project.

- community: with the increased visibility, we'll work towards growing Hop's community. More members in the Hop community means we'll have more people using, testing, discussing and documenting the Hop platform, which will almost automatically increase Hop's code quality and extend its functionality.

- contributions: with more visibility and a larger community, we hope to receive more contributions to the Hop platform. We've already been in discussions with organizations who have been reluctant to contribute to (or even talk about) Pentaho Data Integration in the past, but are now eager to contribute to Hop.

Many organizations consider the "Apache" prefix to be a synonym for robust, reliable and enterprise-ready software. As Apache Hop, we'll work hard to make sure Hop joins the ranks of popular data platforms like Spark, Flink, Kafka and Airflow, and becomes another household name in modern data architectures.

What does this mean for know.bi?

We strongly believe Apache Hop is an exciting new direction for know.bi. We started as BI consultants and data engineers with a strong focus on the Pentaho platform. When development on the Pentaho platform came to a standstill, we decided to join forces with Matt and started building Hop. Our goal was not just to build a software platform: we wanted to revive the Pentaho and Kettle community, and extend that existing community to a much larger audience. As a platform and as a community, Hop is a natural transition from a once great platform to what we believe can be a rising star in the data engineering landscape.

We will continue to support customers in their Pentaho projects, but our focus will gradually shift towards Apache Hop. Apart from Hop, we'll stick to our focus on a limited number of other technologies that we excel in (Neo4j, AWS/GCP, Vertica) to build the best possible solutions for our customers. It goes without saying that these technologies have, or will have, tight integration with Apache Hop. As know.bi, our goal remains unchanged: we want to add value for our customers through technology we believe in.

Once Hop reaches version 1.0 in the not too distant future, we'll start providing migration services from Pentaho/Kettle to Hop.

Since Hop has built-in life cycle management (projects, environments, testing, git, ...), these services will be project upgrades and improvements rather than lift and shift migrations. We'll obviously help customers without a Pentaho/Kettle background to get started with Hop as well. We believe visually developing pipelines on Kafka, Spark, Flink, Google Dataflow, Google BigQuery and many other platforms will be a game changer for data pipeline development, orchestration and maintenance.

Hop will not just be an open source platform. We are working on professional support and services, to make sure your Hop projects and production environments are in good hands. More on that later.

We're excited about the future with Apache Hop, and we sure hope you'll join us on this journey!

About Project Hop

Project Hop (Hop Orchestration Platform) aims to facilitate all aspects of data and metadata orchestration.

About The Apache Software Foundation

From Wikipedia:

The Apache Software Foundation (ASF) is an American nonprofit corporation (classified as a 501(c)(3) organization in the United States) to support Apache software projects, including the Apache HTTP Server. The ASF was formed from the Apache Group and incorporated on March 25, 1999.

The Apache Software Foundation is a decentralized open source community of developers. The software they produce is distributed under the terms of the Apache License and is free and open-source software (FOSS). The Apache projects are characterized by a collaborative, consensus-based development process and an open and pragmatic software license. Each project is managed by a self-selected team of technical experts who are active contributors to the project. The ASF is a meritocracy, implying that membership of the foundation is granted only to volunteers who have actively contributed to Apache projects. The ASF is considered a second generation open-source organization, in that commercial support is provided without the risk of platform lock-in.

Among the ASF’s objectives are: to provide legal protection to volunteers working on Apache projects; to prevent the Apache brand name from being used by other organizations without permission.

The ASF also holds several ApacheCon conferences each year, highlighting Apache projects and related technology.
