As explained in a previous post, know.bi has been working with Matt Casters (Neo4j) and our growing...

The perfect time to upgrade your Pentaho projects to Apache Hop is now!

Bart Maertens

Pentaho: the rise and fall of a platform

Imagine a couple of years ago. You are carefully exploring the market to make a tool selection for a data engineering platform. The platform you're looking for is innovative, extensible and customizable. You want it to be open source but commercially supported. Your platform of choice needs to have sufficient market adoption and needs to be carried by a significant community of developers and enthusiasts.

Continue to imagine that was a couple of years ago and the platform you meticulously selected was Pentaho Data Integration (or PDI, or Kettle). It definitely was the right choice at the time: PDI had an active and vibrant community of users and developers. We were very heavily involved, good times and fond memories!

Hadoop was happily surfing its way to the top of the hype cycle and PDI integrated perfectly with it. You could visually develop, run and debug data processing on MapReduce, there even was support for a new upcoming Hadoop component called Apache Spark. The future looked bright!

Fast forward to today. Your organization now depends on the PDI project you continue to build and maintain. However, the Pentaho platform, now part of Hitachi Vantara, completely stopped evolving. There still are two releases every year, but the only thing that seems to change is the version number. There are barely any new features and, even worse, the once vibrant community around PDI/Kettle has completely evaporated.

What seemed the perfect choice at the time doesn’t seem to be so perfect right now.

There’s (Apache) Hop(e)!

In late 2019, Matt Casters ((PDI/Kettle project founder and lead architect) and know.bi (Hans Van Akelyen and Bart Maertens) joined forces, forked the PDI/Kettle code and created Project Hop. After about one year of work, the project joined the Apache Software Foundation (ASF) and became Apache Hop (Incubating). Another year later, Apache Hop graduated from the ASF and became a Top-Level project.

A couple of years after the project started, Apache Hop is a well-established and mature project, with contributions from tens of individual developers and organizations. A new and growing community is increasingly excited about everything that happens around Apache Hop, there are frequent releases (5 in 2022 alone!) and tons of new functionalities have been added.

Spoon, the visual development environment in PDI was abandoned and a new GUI was written from scratch to be pluggable and web and cloud ready. There’s life cycle support with projects, environments and git integration. Docker is fully supported, Kubernetes support is making steady progress. Pipelines designed in Hop GUI can run on the native Apache Hop engine (on local and remote configurations), but also on Apache Spark, Apache Flink and Google Dataflow through Apache Beam. Configuration and administration have been rewritten from scratch with unified, easy to use command line tools and lots, lots more!

The only way is up: Upgrade to Apache Hop!

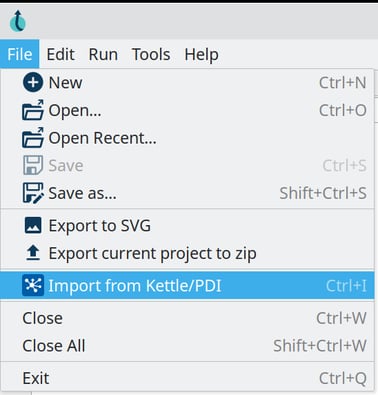

The best news of all for your Pentaho project is that migrating to Apache Hop is straightforward.

The best news of all for your Pentaho project is that migrating to Apache Hop is straightforward.

Even though the Apache Hop development team never intended Apache Hop to be compatible with Pentaho Data Integration, the shared history allows your jobs and transformations to be easily converted into full-blown Apache Hop projects.

Apache Hop treats metadata a lot more stricter than Pentaho. Database connections for example are repeated in each and every job and transformation in Pentaho, but only once per project in Apache Hop. That may require you to do some cleanup after the initial import, but the end result is a cleaner project that is a lot easier to manage and maintain.

There's so much new functionality available after you've moved over to Apache Hop that we refer to the entire process as an upgrade rather than a migration.

Imagine... you can keep your entire Pentaho Data Integration project history and can start innovating again!

We're here to help

We can help you to make the entire upgrade process even smoother: we can check how ready your project is to upgrade to Apache Hop, we can help you in the upgrade process or even perform the entire upgrade for you.

We can help you clean up some of your PDI work to use the optimized, cleaner, and lighter Apache Hop ways of working, to make sure you hit the Apache Hop ground running. As part of the upgrade process, we’ll teach you how to change your working habits and best practices from PDI/Kettle to Apache Hop.

In the upgrade process, you’ll receive a pre- and post-upgrade overview report as well as a detailed recommendations report.

Blog comments